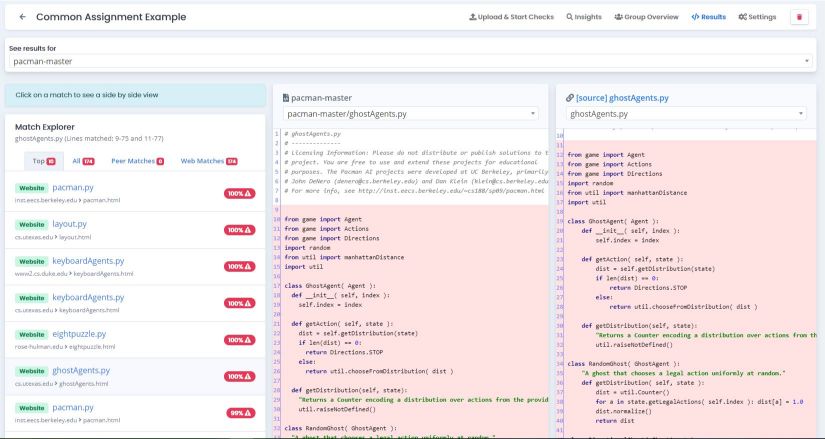

Timestamps of each code save for best match between the two students.By default this list is restricted to just those that include the final submission from at least one of the students. If you click on an entry in the list of generated reports, you will get a list of all comparisons between two students' code. Note that there is no setting that will guarantee detection of plagiarism: the tool will identify similar documents according to the parameters, but the user must judge through manual inspection whether this is due to plagiarism. You should also consult the winnowing algorithm paper to properly understand the function of each parameter. These parameters are very specific to the implementation, and we recommend that you keep to the default values unless you have good reason to do otherwise. Historical docs syntactically invalid normalised size threshold defaults to 130.Historical docs syntactically valid normalised size threshold defaults to 10.Final docs common fingerprint size threshold defaults to 10.Final docs common fingerprint %age threshold defaults to 0.75.Final docs fingerprint T threshold (window = T − K + 1) (default 10) is the guarantee threshold t.Final docs fingerprint K-gram size (default 5) is the noise threshold k.There are 6 parameters for the tool that can be altered from their defaults prior to creating a new report. When ready, the status will change to "Success" in the list of generated reports. Click "Create" to generate a new plagiarism reportĭepending upon the number of comparisons required, this report may take a few minutes to complete.Click the "Plagiarism Detection" button for the problem.Locate the problem your are interested in.In your course admin area, select your course and then click the "Student Submissions" button.Our tool generates reports for all submissions to a specific problem in a course. Read-only files in the workspace are also excluded from comparison to avoid trivial similarities. 
We handle this by allowing suchīootstrap code to be specified to the similarity checker so that it can be ignored Incomplete/invalid codeįor some Grok problems the student is provided with some initial scaffolding/skeleton code to get them started, which would lead to all code having some base level of similarity.

The tool uses our own implementation of the k-gram winnowing algorithm used by MOSS, as described in the SIGMOD 2003 paper.

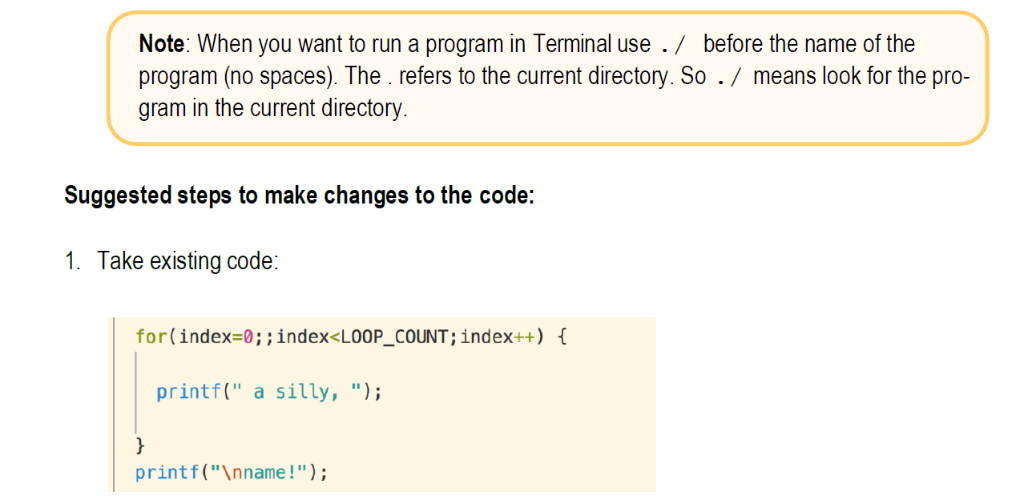

A comparison algorithm is applied to the documents to provide a measure of similarity.(If the language has appropriate support) the documents are normalised to remove unimportant differences such as white-space and variable names then.The files in the workspace are combined together to form a single document then.In order to compare two workspaces, the following steps are performed: This rest of this page explains how to use our internal tool. See the submission export documentation for more details. Comparisons are restricted to the final submissions by students, but the source-code normalisation and syntactic analysis is available for a much wider range of languages. Our submission export facility provides Perl scripts to automate the process of uploading exported submissions to the official Stanford MOSS site for analysis.For some programming languages (including Python), source-code normalisation and syntactic analysis is used to overcome simple changes made by students to mask their reuse of code. It can be run across each student's entire code history for a problem, allowing common code-sharing tactics to be spotted. Our internal tool uses our own implementation of the similarity checking algorithm used by MOSS.Both make use of the approach used by MOSS, a widely used software similarity detection tool. Grok provides two mechanisms to support plagiarism detection within students' code. How do I use Grok's plagiarism detection system?
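To make the comparison steps and the K-gram size and T threshold parameters more concrete, here is a minimal Python sketch of normalisation followed by k-gram winnowing. It is not Grok's implementation. The function names (`normalise`, `fingerprints`, `similarity`), the use of CRC32 as the k-gram hash, and the overlap measure are all assumptions made for illustration; this page does not describe how the tool applies its common fingerprint thresholds. Only the defaults (K = 5, T = 10) and the window size T − K + 1 come from the parameter list.

```python
import io
import keyword
import tokenize
import zlib

K = 5   # k-gram size (noise threshold k), the documented default
T = 10  # guarantee threshold t, the documented default


def normalise(source: str) -> str:
    """Drop comments and layout, and rename every non-keyword name to 'v'.

    Illustrative only: builtins such as print are renamed too, and
    syntactically invalid code may make the tokenizer raise an error.
    """
    skip = {tokenize.COMMENT, tokenize.NL, tokenize.NEWLINE,
            tokenize.INDENT, tokenize.DEDENT, tokenize.ENDMARKER}
    parts = []
    for tok in tokenize.generate_tokens(io.StringIO(source).readline):
        if tok.type in skip:
            continue
        if tok.type == tokenize.NAME and not keyword.iskeyword(tok.string):
            parts.append("v")
        else:
            parts.append(tok.string)
    return "".join(parts)


def fingerprints(text: str, k: int = K, t: int = T) -> set:
    """Winnow the k-gram hashes of text using a window of t - k + 1 hashes.

    Only hash values are kept; positions are not recorded in this sketch.
    """
    hashes = [zlib.crc32(text[i:i + k].encode())
              for i in range(len(text) - k + 1)]
    window = t - k + 1
    selected = set()
    for start in range(len(hashes) - window + 1):
        # Keep the minimum hash of each window as a fingerprint.
        selected.add(min(hashes[start:start + window]))
    return selected


def similarity(a: str, b: str) -> tuple:
    """Return (shared fingerprint count, shared fraction of the smaller set)."""
    fa, fb = fingerprints(normalise(a)), fingerprints(normalise(b))
    common = fa & fb
    smaller = min(len(fa), len(fb))
    return len(common), (len(common) / smaller if smaller else 0.0)


original = """
def total(values):
    result = 0
    for value in values:
        result = result + value
    return result
"""

renamed = """
def add_up(xs):   # same logic, new names
    acc = 0
    for x in xs:
        acc = acc + x
    return acc
"""

count, fraction = similarity(original, renamed)
# The fraction is 1.0 here because both programs normalise to the same text.
print(count, fraction)
```

Renaming variables and adding a comment does not change the fingerprints in this sketch, which is the kind of masking that normalisation is meant to overcome. A document whose normalised text is shorter than T characters produces no fingerprints at all, which matches the role of t as the length above which a shared substring is guaranteed to be detected.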
